Introduction to MLOps in AIOps

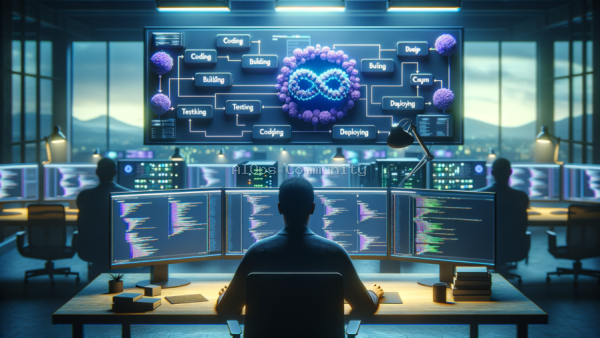

MLOps and AIOps address two related problems. MLOps, or Machine Learning Operations, streamlines the lifecycle of machine learning models, from training through deployment and monitoring. AIOps, or Artificial Intelligence for IT Operations, applies AI to IT operations tasks such as anomaly detection and alert triage. Because AIOps depends on models running in production, applying MLOps practices to those models can improve the efficiency and reliability of the IT processes that rely on them.

This tutorial explains how to build a continuous integration pipeline that brings MLOps practices into an AIOps framework. It is aimed at data scientists and MLOps engineers who want practical guidance on making model deployment more efficient.

By implementing a continuous integration pipeline, organizations can ensure that machine learning models are consistently updated, tested, and deployed without disrupting ongoing operations. This approach not only enhances operational efficiency but also optimizes resource utilization.

Understanding the Components of a Continuous Integration Pipeline

Before diving into the integration process, it’s essential to understand the components of a continuous integration (CI) pipeline. A CI pipeline typically consists of several stages, including source control, build, test, and deploy. Each stage plays a critical role in ensuring that machine learning models are reliably integrated into the AIOps environment. The first three stages are described here; deployment is covered in Step 4 of the guide that follows.

Source Control: This stage involves managing the source code of machine learning models. Version control systems like Git are commonly used to track changes, manage collaboration, and revert to previous versions if necessary. For MLOps in AIOps, source control ensures that all stakeholders have access to the latest model code.

Build: In the build stage, the source code is packaged (and compiled, where the language requires it), and dependencies are resolved. This stage prepares the machine learning model for testing by ensuring that all necessary components are in place. Automation servers like Jenkins can run builds automatically on each change, reducing manual intervention and errors.

Test: Testing is crucial to verify that the machine learning model behaves as expected. Various testing frameworks can be employed to conduct unit, integration, and performance tests. Testing in a CI pipeline helps identify issues early, reducing the risk of deploying faulty models.
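The way these stages chain together can be sketched in a few lines of Python. This is a minimal illustration, not how Jenkins or any particular CI server works internally: the `Stage` class, the `run_pipeline` function, and the stage bodies are all hypothetical stand-ins. The point it shows is the fail-fast behavior: a failure in one stage prevents later stages, including deploy, from running.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Stage:
    name: str
    run: Callable[[], bool]  # returns True on success, False on failure


def run_pipeline(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and stop at the first failure (fail-fast)."""
    completed: List[str] = []
    for stage in stages:
        if not stage.run():
            return False, completed
        completed.append(stage.name)
    return True, completed


# Hypothetical stage bodies. A real pipeline would call out to Git,
# a packaging tool, a test runner, and a deployment tool here.
stages = [
    Stage("source", lambda: True),
    Stage("build", lambda: True),
    Stage("test", lambda: False),   # simulate a failing test stage
    Stage("deploy", lambda: True),  # never reached in this run
]

ok, done = run_pipeline(stages)
print(ok, done)  # False ['source', 'build']
```

Because the simulated test stage fails, the deploy stage is skipped, which is the behavior that keeps a faulty model out of the AIOps environment.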

Integrating MLOps into AIOps: Step-by-Step Guide

With a clear understanding of the CI pipeline components, we can now integrate MLOps into AIOps. This integration involves several key steps that ensure a seamless process.

Step 1: Define the Integration Strategy

Before starting the integration, it’s crucial to define a strategy that aligns with your organization’s goals. Consider the specific requirements of your AIOps framework and the machine learning models in use. A well-defined strategy will guide the integration process and mitigate potential challenges.

Step 2: Automate Model Training and Validation

Automation is at the heart of MLOps. Automate the training and validation of machine learning models to ensure they are always up to date with the latest data. This can be achieved using tools like Kubeflow, which orchestrates machine learning workflows, or MLflow, which tracks experiments and manages model versions.
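As a rough sketch of what an automated train-and-validate step might look like, the example below fits a deliberately simple anomaly detector on latency data and scores it on a labeled holdout set. Everything here is an assumption made for illustration: the threshold "model", the function names, the sample data, and the 0.9 accuracy bar. It uses only the Python standard library; in practice a tool such as Kubeflow or MLflow would schedule and record a step like this.

```python
import statistics
from typing import Dict, List, Tuple


def train(latencies_ms: List[float], k: float = 3.0) -> Dict[str, float]:
    """Fit a threshold 'model': flag values above mean + k * stdev."""
    mean = statistics.mean(latencies_ms)
    stdev = statistics.pstdev(latencies_ms)
    return {"threshold": mean + k * stdev}


def predict(model: Dict[str, float], value: float) -> bool:
    """Return True if the value is classified as an anomaly."""
    return value > model["threshold"]


def validate(model: Dict[str, float], labeled: List[Tuple[float, bool]]) -> float:
    """Return accuracy on a labeled holdout set of (value, is_anomaly) pairs."""
    correct = sum(predict(model, value) == label for value, label in labeled)
    return correct / len(labeled)


def train_and_validate(
    train_data: List[float],
    holdout: List[Tuple[float, bool]],
    min_accuracy: float = 0.9,  # hypothetical promotion bar
) -> Tuple[Dict[str, float], float, bool]:
    """Train, validate, and decide whether the model may move on."""
    model = train(train_data)
    accuracy = validate(model, holdout)
    return model, accuracy, accuracy >= min_accuracy


# Made-up sample data: normal latencies for training, labeled points for validation.
train_data = [100, 102, 98, 101, 99, 100, 103, 97]
holdout = [(101, False), (99, False), (250, True), (104, False), (180, True)]

model, accuracy, promote = train_and_validate(train_data, holdout)
print(accuracy, promote)
```

Returning an explicit promote flag lets the pipeline decide automatically whether a retrained model continues to the testing stage or is rejected.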

Step 3: Implement Continuous Testing

Continuous testing is essential for maintaining model reliability. Implement automated tests that validate model accuracy, performance, and scalability. Tools like TensorFlow Extended (TFX), which includes components for data validation and model evaluation, can be integrated into the testing phase to streamline this process.
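One common way to express such tests is a quality gate that compares a candidate model against the model currently in production. The sketch below is illustrative only: the `Metrics` fields, the `quality_gate` function, and the threshold values are assumptions, and a real gate would be tuned to the models and service-level targets in use. It does not use TFX.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Metrics:
    accuracy: float         # fraction of correct predictions on a fixed test set
    p95_latency_ms: float   # 95th percentile inference latency


def quality_gate(
    candidate: Metrics,
    baseline: Metrics,
    max_accuracy_drop: float = 0.01,   # hypothetical tolerance
    max_latency_ms: float = 200.0,     # hypothetical latency budget
) -> List[str]:
    """Return a list of failure messages; an empty list means the gate passed."""
    failures: List[str] = []
    if candidate.accuracy < baseline.accuracy - max_accuracy_drop:
        failures.append(
            f"accuracy {candidate.accuracy:.3f} is more than "
            f"{max_accuracy_drop:.3f} below baseline {baseline.accuracy:.3f}"
        )
    if candidate.p95_latency_ms > max_latency_ms:
        failures.append(
            f"p95 latency {candidate.p95_latency_ms:.0f} ms exceeds "
            f"{max_latency_ms:.0f} ms budget"
        )
    return failures


baseline = Metrics(accuracy=0.92, p95_latency_ms=120.0)
good = Metrics(accuracy=0.93, p95_latency_ms=110.0)
bad = Metrics(accuracy=0.85, p95_latency_ms=350.0)

print(quality_gate(good, baseline))       # []
print(len(quality_gate(bad, baseline)))   # 2
```

A CI job could fail the build whenever the returned list is non-empty, and print the messages so that the reason for rejection is visible in the build log.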

Step 4: Deploy with Confidence

Once models have passed all tests, they can be deployed into the AIOps environment. Use a container orchestration platform such as Kubernetes to manage the containerized model services, which provides scalability and resilience. Continuous deployment practices, such as rolling updates that replace instances gradually, enable updates without disrupting operations.
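To make the deployment step concrete, the sketch below builds a Kubernetes Deployment manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The service name, image reference, port, and `/healthz` probe path are hypothetical placeholders. The rolling update settings shown (`maxUnavailable: 0`, `maxSurge: 1`) are one reasonable choice for replacing instances without reducing capacity, not a recommendation for every workload.

```python
import json
from typing import Any, Dict


def build_deployment(
    name: str, image: str, replicas: int = 3, port: int = 8080
) -> Dict[str, Any]:
    """Build a Deployment manifest that rolls out new versions gradually."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "strategy": {
                "type": "RollingUpdate",
                # Keep every existing replica serving until a new one is ready.
                "rollingUpdate": {"maxUnavailable": 0, "maxSurge": 1},
            },
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                            # Traffic is sent to a pod only after this probe succeeds.
                            "readinessProbe": {
                                "httpGet": {"path": "/healthz", "port": port}
                            },
                        }
                    ]
                },
            },
        },
    }


# Hypothetical service name and image reference.
manifest = build_deployment(
    "anomaly-detector", "registry.example.com/anomaly-detector:1.4.2"
)
print(json.dumps(manifest, indent=2))
```

Generating the manifest from code lets the pipeline inject the image tag produced by the build stage, so the version that was tested is the version that is deployed.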

Best Practices and Common Pitfalls

While integrating MLOps into AIOps offers numerous benefits, it’s essential to follow best practices to maximize success. Regularly review and update your CI pipeline to accommodate new tools and technologies. Engage cross-functional teams to foster collaboration and innovation.

Common pitfalls to avoid include neglecting model monitoring and failing to maintain clear documentation. Monitoring is critical for detecting anomalies and ensuring models perform optimally in production. Comprehensive documentation supports knowledge sharing and eases onboarding for new team members.
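As one illustration of what model monitoring can involve, the sketch below checks whether a model input has drifted away from the data the model was trained on. It measures how far the recent mean has moved, in units of the baseline standard deviation. The function names, the sample values, and the threshold of 2.0 are assumptions chosen for the example; production systems often use more robust statistical tests.

```python
import statistics
from typing import List


def drift_score(baseline: List[float], recent: List[float]) -> float:
    """Shift of the recent mean, measured in baseline standard deviations."""
    base_mean = statistics.mean(baseline)
    base_std = statistics.pstdev(baseline)
    if base_std == 0:
        raise ValueError("baseline has zero variance; drift score is undefined")
    return abs(statistics.mean(recent) - base_mean) / base_std


def has_drifted(
    baseline: List[float], recent: List[float], threshold: float = 2.0
) -> bool:
    """Flag drift when the recent mean moves more than `threshold` deviations."""
    return drift_score(baseline, recent) > threshold


# Made-up values of a single input feature.
training_values = [10, 12, 11, 9, 13, 10, 11, 12]
stable_window = [11, 10, 12, 11]
shifted_window = [15, 16, 14, 15]

print(has_drifted(training_values, stable_window))   # False
print(has_drifted(training_values, shifted_window))  # True
```

A check like this could run on a schedule and trigger retraining or an alert when it returns True, which ties monitoring back into the pipeline.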

Conclusion

Integrating MLOps within an AIOps framework through a continuous integration pipeline enhances model deployment efficiency and operational effectiveness. By following the steps outlined in this guide, data scientists and MLOps engineers can create a robust pipeline that supports the seamless integration of machine learning models into IT operations.

Adopting this approach not only optimizes resource utilization but also ensures that AI-driven insights are continuously refined and redeployed as data and systems change.

Written with AI research assistance, reviewed by our editorial team.